In 2004 Tim Brennan, David Wells, and Jack Alexander authored a report for the National Institute of Corrections titled Enhancing Prison Classification Systems: The Emerging Role of Management Information Systems. The report was commissioned through a contract with Northpointe Institute for Public Management of Traverse City, Michigan, founded by Wells and Brennan in 1989.

The report’s goal was to explore how then-recent advances in networked computing technology could improve the efficiency of classifying those in prison by risk level. “Basically,” they write in their executive summary, “current methods of prison classification are underutilizing this information technology infrastructure. The vast memory and analytical power of today’s hardware and software offer great potential for improving classification decisionmaking” (page xix).

Brennan et al. describe the work of classifying prisoners as “knowledge work”, since it involves prison staff compiling data from various sources and analyzing it using “implicit mental models and explicit algorithms”. Networked computers could improve the productivity of those classifying prisoners by automating portions of the data collection process. They could also allow prison staff to classify prisoners more rapidly, identifying trends or factors that might predict a person’s likelihood of committing new criminal offenses more quickly and accurately than human evaluators, or so the authors claimed.

These technologies have advanced much further in the intervening years, and Northpointe has offered its services under contract to multiple states, including to the Wisconsin Department of Corrections (WIDOC). Northpointe entered into a contract to provide its Correctional Offender Management Profiling for Alternative Sanctions (COMPAS) tool to the WIDOC in 2015 and has remained under contract through at least FY2022. I requested records related to this contract and, a little under a year later, I received them. I will provide these for download in full at the end of this post, but because the records are quite extensive and this is an often-overlooked aspect of the US prison system (imo), I thought it would be useful to provide a preliminary summary and analysis.

This issue is also of interest to me in part because it resembles, at least in broad strokes, the kind of work that I do for libraries: sorting, classifying, and describing all manner of published material, from zines published in the 1980s to monographic series written by subject matter experts for a specialized, technical audience. This is done according to a dizzying array of description standards and metadata schemas (each with its own acronym, of course), all of which must be processed and manipulated by the vendor-provided software where much of this work takes place. Because of this, I appreciate the vast improvements in efficiency that networked computers represent when it comes to classifying, storing, and retrieving information. However, as my e-mail inbox can attest, these technologies are not without their problems.

In my work, the potential downside of misclassifying or misdescribing something is often minor. Perhaps a student does not find all the relevant books the library holds on a subject for a class assignment, or a researcher is unable to find a specific work even though our institution holds it. There are more serious issues when it comes to the description of marginalized groups in libraries, such as the classification of literature from various parts of Africa alongside the literature of the countries that colonized them (see Classifying African Literary Authors). There are other examples involving other groups, but these hardly invalidate the inherent convenience of computerized library catalogs compared to their printed predecessors. In the case of prisoner classification systems, the risks are much greater: people may be kept in prison longer or required to take intensive psychological treatment programs that aren’t appropriate for them.

Northpointe itself acknowledges these risks in its original bid for the contract. One such risk of classifying prisoners, according to Northpointe, is “prisoner ‘deterioration’ and ‘prisonization’”. Though the bid does not define these terms specifically, the text that follows offers a telling suggestion:

> These risks have serious consequences for both the institution (idleness, discipline problems) and the community (high recidivism, more alienated offenders). These risks are more likely if prisoners are simply “warehoused” and if the prison fails to match the inmates to needed programs to prepare for reentry to the community.

(Attachment B, page 133)

There is also the risk of litigation stemming from “custody classification errors” or the public relations problems stemming from “high profile crimes” being linked to early release or parole decisions. Conversely, “if agency policies and procedures adopt overly restrictive classifications styles,” they write, “more systematic ‘over-classification’ errors occur … escalating overcrowding”. Combine enough of these errors and it can lead to a “loss of public trust in agencies ability to distinguish high risk from low risk offenders” as well as a “failure to rehabilitate”, which produces “on-going cost escalations” and, again, the potential for costly litigation (ibid., page 133).

So how are these decisions made? Northpointe is rather unambiguous in its assessment of its competition: most systems in prisons and jails “were not developed statistically and have minimal or unknown levels of predictive accuracy”. COMPAS, by contrast, was developed to improve on these efforts so that more information could be gleaned about a particular prisoner as soon as they enter prison custody. Because Northpointe markets the service both to courts, as a way to assess risk after arrest but before trial, and to prison officials assessing whether someone can be released before completing their sentence (e.g. on parole), making accurate predictions from existing data, or from minimal additional data, is of the utmost importance. “The aim,” they write, “was to use data that was currently available at the earliest stage for new incoming prisoners. These data include the offender’s criminal history and selected demographic and other criminogenic factors” (ibid., page 135).

Through an analysis of “a large [Michigan Department of Corrections] database”, Northpointe claims to have found a number of these “criminogenic” factors that were statistically correlated with “the commission of new infractions”:

- Age at first arrest

- Age at assessment

- Number of probation revocations

- High school graduation

- Criminal thinking

- Educational vocational resources

- Number of mental health commitments (ibid., page 135)

Unfortunately, they do not define “criminal thinking” in this document, though we will return to the subject later. “Age at first arrest” is also problematic: being arrested is not the same as being found guilty, yet no distinction seems to be made here. Furthermore, whether someone is arrested often comes down to the discretion of individual police officers rather than any kind of automatic process.

Nevertheless, “using both training and validation samples,” they write, “and two separate statistical methods (Logistic regression and Random Forests), the above six [sic] factors formed an ‘optimal sub-set’ of factors for predicting new infractions.” That they misstate the number of factors (seven are listed) when describing their own model in their own document makes me hesitant to take their numbers at face value. In any event, according to Northpointe’s submission, both the logistic regression and random forest models had an accuracy of around 70%, meaning they correctly predicted whether additional disciplinary infractions occurred in roughly seven out of ten cases (ibid., page 136).
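An accuracy figure on its own is hard to interpret without knowing how common the outcome is. As a purely hypothetical illustration (the 70/30 split below is invented for the example and is not taken from Northpointe’s data), imagine a group in which 70% of people commit no new infraction. A “model” that ignores every factor and always predicts “no infraction” would also be about 70% accurate:

```python
# Hypothetical illustration: the 70/30 split below is an assumption made for
# this example, not a figure from Northpointe's submission.

def accuracy(predictions, outcomes):
    """Share of cases where the prediction matched the observed outcome."""
    correct = sum(p == o for p, o in zip(predictions, outcomes))
    return correct / len(outcomes)

# 1 = new infraction, 0 = no new infraction (hypothetical labels).
outcomes = [1] * 30 + [0] * 70

# A "model" that uses none of the listed factors and always predicts 0.
always_no = [0] * len(outcomes)

print(f"Accuracy of always predicting 'no infraction': {accuracy(always_no, outcomes):.0%}")
# prints: Accuracy of always predicting 'no infraction': 70%
```

This does not show that COMPAS performs no better than such a shortcut. It only shows that a 70% figure needs to be read alongside the base rate of infractions in the sample before it means much.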

In studying to be a librarian I took a class on data mining. One of the most important lessons our instructor hammered into us throughout the course is that creating the dataset will often account for over 90% of the work of any given machine learning project. Computers asked to develop a model to predict the likelihood of an outcome will always give you an answer. They will never tell you that there is not enough data or that a particular method is the wrong one for the problem you are analyzing. A computer cannot tell the difference between data representing weather patterns and crop yields and data conveying the results of a psychological questionnaire. It is up to the people creating the data and evaluating the results to determine how reliable a given prediction is in a particular context.
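A minimal sketch of that point, in plain Python with no machine learning library: the data below are random numbers generated for the example (seven columns only to echo the seven factors listed earlier; nothing here comes from COMPAS), yet a logistic regression fit to them by simple gradient descent still produces a probability for a new case. Nothing in the procedure warns that the inputs are meaningless.

```python
import math
import random

def predict_proba(weights, bias, x):
    """Logistic model: squash a weighted sum of the inputs into (0, 1)."""
    z = bias + sum(w * xj for w, xj in zip(weights, x))
    return 1.0 / (1.0 + math.exp(-z))

def fit_logistic_regression(X, y, lr=0.1, epochs=500):
    """Plain batch gradient descent on the logistic (log) loss."""
    n_features = len(X[0])
    weights = [0.0] * n_features
    bias = 0.0
    for _ in range(epochs):
        grad_w = [0.0] * n_features
        grad_b = 0.0
        for xi, yi in zip(X, y):
            err = predict_proba(weights, bias, xi) - yi
            for j in range(n_features):
                grad_w[j] += err * xi[j]
            grad_b += err
        weights = [w - lr * g / len(X) for w, g in zip(weights, grad_w)]
        bias -= lr * grad_b / len(X)
    return weights, bias

random.seed(0)
# Features and labels are independent random noise by construction:
# there is no real relationship here for the model to learn.
X = [[random.gauss(0, 1) for _ in range(7)] for _ in range(200)]
y = [random.randint(0, 1) for _ in range(200)]

weights, bias = fit_logistic_regression(X, y)
new_person = [random.gauss(0, 1) for _ in range(7)]
print(f"Predicted probability of the outcome: {predict_proba(weights, bias, new_person):.2f}")
```

The model fits, converges, and answers. Whether that answer means anything depends entirely on what went into the dataset, which is exactly the judgment the computer cannot make.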

One of the projects we did in that class involved analyzing genetic sequencing data in an effort to identify potential connections between specific genes and specific attributes in a given animal. For this assignment we were paired with another person in the class and asked to prepare and analyze a genetic dataset, then give a short impromptu presentation of our findings to the class, mainly to demonstrate that we’d chosen an appropriate type of analysis and prepared the dataset correctly. I happened to be paired with a woman pursuing her PhD in Animal Sciences. When I said that I found these datasets a little confusing because I lacked a background in genetics or biology, she told me that when researchers in her field investigated the predictions made by models like the one we were practicing with, they often found the predicted connections to be faint or nonexistent.

As interesting as this class was, I am humble enough to recognize the limits of my knowledge in this area. Luckily, because Northpointe has successfully implemented versions of its COMPAS software in other states, its work has attracted the attention of experts. In 2007 Skeem and Louden of the University of California, Davis conducted an independent assessment of the COMPAS tool then in use in California and found it significantly lacking.

“The strengths of the COMPAS”, they write in their conclusion, “are that it appears relatively easy for professionals to apply, looks like it assesses criminogenic needs, possesses mostly homogenous scales, and generates reports that describe how high an offender’s score is on those scales relative to other offenders in that jurisdiction. In short, we can reliably assess something that looks like criminogenic needs and recidivism risk with the COMPAS. The problem is that there is little evidence that this is what the COMPAS actually assesses” [emphasis in original] (Skeem & Louden, page 29).

In addition to critiquing specific factors included in COMPAS, Skeem and Louden cast doubt on whether COMPAS actually predicts recidivism. “In our view, the reader must wonder why the COMPAS produces no single ‘risk’ score that can be evaluated by independent investigators. Instead, the authors create various ‘Risk Scales’ that change from evaluation to evaluation, and often combine parts of the COMPAS with other variables” (ibid., page 29). Beyond this lack of data, the authors also highlight the absence of evidence that COMPAS actually adjusts to changes in criminogenic factors over time. This is particularly important for use in prisons if, for example, completing treatment programs is considered a factor in someone being granted parole. Because of these issues, Skeem and Louden state that they cannot recommend the COMPAS for application to individual offenders within the California Department of Corrections (ibid., page 6).

From what I can tell, that is precisely how it is being used by WIDOC.

In 2009, Northpointe staff published a response paper defending their product and its application against these critiques. It is no longer available on their website, but thanks to the Wayback Machine I was able to download a copy. It opens with a telling acknowledgement: “most of the evidence for the reliability and validity of COMPAS is found in the results of in-house research studies conducted by Northpointe across a variety of jurisdictions and states” (page 2). That is to say, the evidence purporting to show the efficacy of their tools is based on internal data not shared with anyone other than, perhaps, the agency with which they are under contract. Later in the response, the authors point out that peer-reviewed papers on COMPAS have since been published, but both papers cited were written by the same people who wrote the response paper. Neither shares the underlying data used in its analysis.

They claim that because agency personnel do have access to this data, their analyses “are often subjected to a more thorough vetting than that provided by the editors or peer-reviewed journals” (ibid., page 2). However, it’s important to remember that these agencies contract with companies like Northpointe precisely because they lack the ability or desire to develop their own tools for this kind of analysis. For example, I found no independent analysis by WIDOC staff in the records responsive to my request, even though the request specifically asked for any meeting minutes or deliberations related to the bidding process.

Herein lie the limits of technological “efficiencies” in addressing inherently social problems like crime, punishment, and justice. Vague variables like “criminal thinking” leave ample room for clinical and correctional professionals to conclude that, for example, someone describing the effect of larger social forces on the circumstances of their crime is demonstrating a lack of remorse or an unwillingness to accept responsibility for their actions. This is not hypothetical, as we will see later.

Consider one of the features COMPAS claims to aid in analyzing: an “inmate’s behavioral adaptation to prison”, which is used to determine whether, for example, someone could be moved to a less restrictive prison or become eligible for programs like work release. Northpointe lists the factors its system uses to determine these “behavioral adaptation ratings”:

- Cooperation with staff

- Respect vs. Disrespect to staff

- Completion of work tasks

- Program successes vs. failures

- Defiant

- Aggressive to staff

- Tries to Con staff [sic]

- Troublemaker with other inmates

- Victimizes weaker inmates

- Quick Temper Etc.

Using this scale, they found that “floor officers can reliably assess an inmate on several key behavior dimensions within minutes using this short checklist (e.g. less than 4 minutes)”. They caution that whoever performs this assessment should know the inmate well enough to provide “a reasonably fair assessment” of their adjustment. These criteria are further refined to sort inmates into a number of “behavioral classes”. “The results are very encouraging and we found that the ‘inmate classes’ were validly linked both to prospective disciplinary levels, criminal history patterns, and also to several main criminogenic factors (e.g. criminal personality, criminal attitudes” (Attachment B, page 139).

I think it’s important to focus on a couple of aspects of these adaptation criteria. First, there is the emphasis on how quickly staff can complete them. Little attention is paid to establishing any guidance on how long prison staff should know a particular prisoner before making these assessments; this is apparently left to the institution or the staff themselves to decide. There is also precious little discussion of how supervisors can check the work of the staff completing these assessments, though naturally Northpointe does include costs for “training the trainers” in their bid (approximately $11,000 in the most recent contract renewal).

Second, the criteria focus almost exclusively on the prisoner’s interactions with prison staff rather than on the thoughts, emotions, behaviors, or actions of the prisoner themselves. When visiting a WIDOC prison, I witnessed numerous staff members become visibly angry, to the point of shouting at other visitors, including the elderly and small children, over very minor issues such as stepping beyond a taped line on the floor while waiting to be processed or failing to notify the prison in writing in advance that they would be using a wheelchair. Anecdotes are anecdotes, but personally, these are not the kind of people I would want making snap judgments about my behavior (“less than 4 minutes”) that could determine whether I spend another year or more in prison.

One might argue that these are implementation problems rather than methodological flaws, but the emphasis on staff interaction shows that these criteria have little to do with the characteristics of the prisoner and more to do with the attitudes of staff towards prisoners. Of course, characteristics of the prisoner are not completely absent, but as these bidding materials show, even when they are included they are not without issue. In their bid, Northpointe included some sample COMPAS reentry narratives and bar charts to demonstrate how the tool can be used to evaluate an individual’s risk. Here is a sample:

These are accompanied by a narrative assessment meant to elaborate on what some of these factors mean, though, confusingly, the narrative does not map exactly onto what is shown in the bar chart. For example, while criminal history (both personal and familial), mental health, substance abuse, and ReEntry Vocation/education appear in both the narrative and the bar chart, all of the factors shown in the chart under Personality/Attitudes are reduced to a single section in the re-entry narrative, “Cognitive Behavioral/Psychological Score”, which in this example shows a score of 10, or “highly probable”. The section of the sample narrative where a “Cognitive Behavioral/Psychological Statement” would appear is literally left blank.

There are training materials on how prison staff should complete these COMPAS scales, but one of the supposed benefits of the COMPAS software is that it can be customized to fit a variety of criminal justice settings, from pre-trial release to probation and parole decisions. The materials suggest using a subset of criteria to “triage” all offenders within a probation agent’s purview, with the full scale used only for “higher risk offenders” (“Meaning and Treatment Implications of COMPAS Score”, page 4).

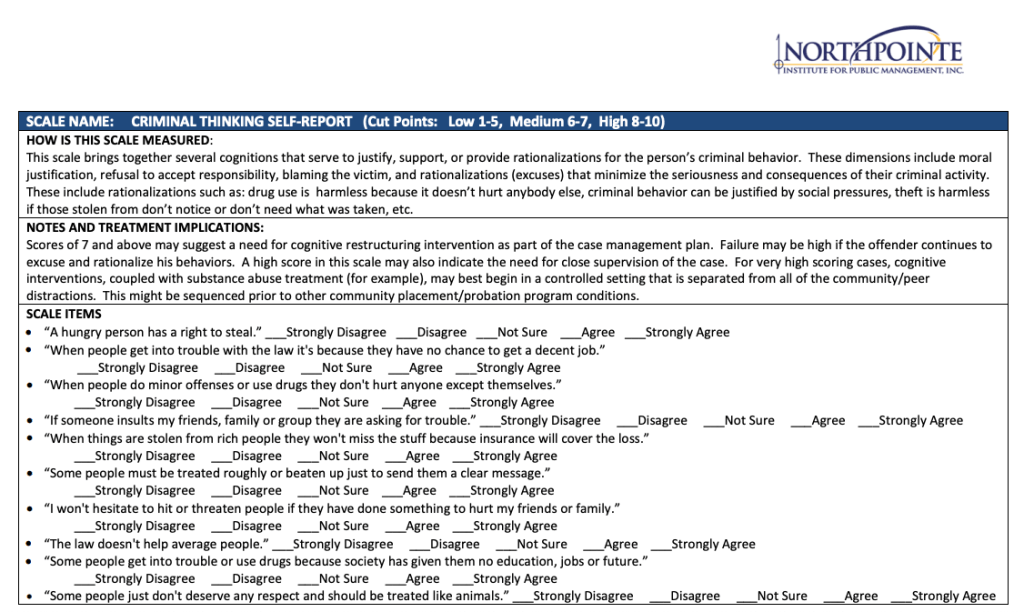

This slide deck also sheds some light on what is meant by “criminal thinking”, which is apparently determined using the questionnaire shown below:

Consider statements like “A hungry person has a right to steal” or “The law doesn’t help average people”. If I were asked for my reaction to these statements, I would almost certainly strongly agree. Apparently this means I may be in need of “cognitive restructuring”. If you want a definition of what that means, you will have to file an open records request of your own.

Here we should return to where I began, with Brennan et al. expressing a desire to use networked computers to improve the efficiency and effectiveness of classifying those in criminal custody. The desire to reduce a question as inherently complex as “will someone convicted or accused of a crime commit another crime in the future?” to a set of numbers is implicitly linked to a desire to outsource more and more of this work to computers. After all, computers are indeed better at evaluating a set of numerical variables to predict a given outcome than a human attempting the same calculations by hand. Appeals by policymakers for more data are usually requests for more numbers to be analyzed, as opposed to non-numerical kinds of data such as oral or written personal histories or the notes from a psychological evaluation conducted by a licensed therapist. Furthermore, those analyzing the data often have more incentives to keep people incarcerated (or at least disincentives for releasing them) than to move in the opposite direction, and this will invariably color decisions at either the institutional or systemic level.

In spite of these problems, the contract with the WIDOC has been lucrative. In its request to extend the current contract, Northpointe, now called equivant, lists the cost of licenses and hosting fees for COMPAS at over $930,000. The total cost, which includes project management, technical support, and training, comes in at over $1 million (“Exhibit_A_-_WI_DOC_Contract_Renewal_Price_Proposal_FY22”). As is common with government contracting, this is far above the cost submitted with the initial bid. In its original cost proposal, Northpointe estimated the cost over the life of the contract (up to 7 years) at somewhere between 2 and 3 million dollars in total (“Northpointe_Cost_Proposal-Options_1_2_with_Notes.pdf”).
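A rough way to see the size of that gap, under one loudly stated assumption: that the FY22 renewal price (just over $1 million) covers a single fiscal year, as its filename suggests. The records as summarized here do not confirm this. Averaging the original estimate over the full seven-year maximum gives a yearly figure to compare against:

```python
# Assumption (not confirmed by the records as summarized here):
# the FY22 renewal price covers a single fiscal year.
renewal_fy22 = 1_000_000  # "over $1 million", so this is a lower bound

# Original estimate: $2-3 million in total over a contract life of up to 7 years.
original_total_low, original_total_high = 2_000_000, 3_000_000
contract_years = 7

# Average yearly cost implied by the original estimate.
avg_low = original_total_low / contract_years
avg_high = original_total_high / contract_years

print(f"Original estimate, averaged per year: ${avg_low:,.0f} to ${avg_high:,.0f}")
print(f"FY22 renewal vs. that average: {renewal_fy22 / avg_high:.1f}x to {renewal_fy22 / avg_low:.1f}x")
# prints:
# Original estimate, averaged per year: $285,714 to $428,571
# FY22 renewal vs. that average: 2.3x to 3.5x
```

Treat the result as approximate: a shorter contract life would raise the original yearly average and narrow the gap, while the renewal figure itself is a floor (“over $1 million”).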

This brings me to my final point regarding what ultimately led me to request these documents in the first place. Services like COMPAS purport to improve the efficiency and effectiveness of prison operations, but in reality they often reinforce existing systemic issues while providing plausible deniability in the form of a seemingly objective numerical rubric by which incarcerated people are evaluated. It is much easier for a DOC official to justify keeping someone in prison, for any reason, if they can point to a score on a chart rather than defend the decision solely with their own words and judgment. I’ve heard from others with loved ones in the WIDOC that they have faced many hurdles trying to obtain copies of COMPAS evaluations, regardless of whether the incarcerated person has given their permission.

These systems clearly affect the persistence of mass incarceration because they are used to determine when and if someone is released. While the war on drugs and overzealous prosecution have rightly been highlighted as drivers of mass incarceration, an often-overlooked factor is the length of sentences and the difficulty of being released on parole or other forms of supervised release. Interrogating how prison and jail administrators use systems like COMPAS can hopefully help address this issue, and I hope that by making these records available I can aid in that effort.

There is much more to be found by going through these documents, certainly more than I could hope to cover in one post. There are materials from other vendors who also sought this bid, additional training and sample materials from Northpointe, and specific documents related to how these services operate in women’s prisons or those for juveniles. They can be browsed or downloaded using the link below.